🧱 GlusterFS: Why It Was a Perfect Fit for SMBs and HomeLabs

For many years, GlusterFS filled a very specific and important niche:

distributed storage for small and medium-sized environments that did not have enterprise budgets, datacenter-class hardware, or massive network bandwidth.

It was never perfect. But it was practical. And in many environments, it was exactly the right tool.

With its deprecation and uncertain future, a gap has emerged – one that is currently not well served by existing alternatives.

This article explains:

- why GlusterFS worked so well for SMBs and HomeLabs

- why current alternatives fall short in these environments

- and why a new approach like QuorumBD is necessary

🧩 Why GlusterFS Worked So Well

GlusterFS succeeded not because it was cutting-edge, but because it was balanced.

What it did right:

- worked on consumer hardware

- tolerated low network bandwidth

- scaled reasonably from 3 to 10 nodes

- required no specialized hardware (like enterprise SSDs)

- was understandable and debuggable by humans

- integrated cleanly with Linux tools and filesystems

For HomeLabs and SMBs, this mattered more than raw performance or theoretical consistency guarantees.

A few practical examples:

- 1 GbE networks instead of 10–100 GbE

- SATA or mixed (consumer) SATA/NVMe instead of full enterprise NVMe fleets

- heterogeneous nodes instead of identical servers

- admins who also do networking, backups, and security – not full-time storage engineers

GlusterFS allowed all of that.
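To put the network point in numbers, here is a back-of-the-envelope sketch of how long it takes to move 1 TB (for example, when re-syncing a replaced disk) at different link speeds. The function name `transfer_seconds` and the `efficiency` parameter are illustrative assumptions, and the calculation uses the theoretical line rate. Real-world throughput is lower because of protocol overhead and disk limits.

```python
def transfer_seconds(data_bytes: float, link_gbit_per_s: float,
                     efficiency: float = 1.0) -> float:
    """Estimate the time to move data_bytes over a link.

    efficiency is a hypothetical fudge factor in (0, 1] for protocol
    overhead; 1.0 means the theoretical line rate.
    """
    if data_bytes < 0 or link_gbit_per_s <= 0 or not 0 < efficiency <= 1:
        raise ValueError("invalid input")
    # 1 Gbit/s = 1e9 bits/s = 125e6 bytes/s
    bytes_per_second = link_gbit_per_s * 1e9 / 8 * efficiency
    return data_bytes / bytes_per_second


ONE_TB = 1e12  # decimal terabyte, in bytes

for link in (1, 2.5, 10):
    hours = transfer_seconds(ONE_TB, link) / 3600
    print(f"{link:>4} GbE: {hours:.2f} h to move 1 TB at line rate")
```

At line rate, 1 GbE needs roughly 2.2 hours for 1 TB, while 10 GbE needs about 13 minutes. That difference is why background traffic matters so much more on small networks.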

⚠️ The Problem After GlusterFS

With GlusterFS effectively discontinued, many users went looking for replacements.

What they found was frustrating:

most modern distributed storage systems assume enterprise conditions by default.

The result is a widening gap between:

- what SMBs & HomeLabs have

- and what modern storage systems expect

🧪 Comparing the Existing Alternatives

Below is a pragmatic comparison from the perspective of small clusters and non-enterprise environments.

🐙 Ceph (RBD / CephFS)

Ceph is powerful. No question.

But power comes at a cost.

Challenges for SMBs & HomeLabs:

- high operational complexity

- strong assumption of fast networks (10 GbE+)

- significant background traffic

- heavy memory and CPU usage

- painful to debug when things go wrong

- scaling down is not its strength

- expectation of enterprise hardware (such as enterprise SSDs)

Ceph shines in large, homogeneous clusters with dedicated storage teams.

For a 3–6 node HomeLab on 1 GbE? It often feels like using a jet engine to power a bicycle.

💽 LINBIT DRBD / DRBD9

DRBD is solid and well-engineered.

But conceptually, it is replication, not distributed storage.

Limitations:

- typically active/passive or carefully managed active/active

- not object-based or scale-out in nature

- split-brain handling is complex and stressful

- scaling beyond a few nodes becomes operationally difficult

DRBD works very well for HA pairs.

It does not replace what GlusterFS offered as a scale-out filesystem.

🧊 Other Solutions (Longhorn, MooseFS, etc.)

Many alternative systems exist, but they usually fall into one of these categories:

- Kubernetes-centric and complex

- optimized for cloud-native workloads

- poorly documented edge cases

- assume fast networks and SSD-only storage

Again: these are not bad systems, just not designed for the GlusterFS audience.

🔍 The Core Problem Nobody Solves

The real issue is not technology.

It is assumptions.

Most modern distributed storage systems assume:

- high-bandwidth, low-latency networks

- identical nodes

- significant RAM and CPU headroom

- tolerance for operational complexity

SMBs and HomeLabs, by contrast, work with:

- 1 GbE networks (maybe 2.5 GbE at best)

- mixed hardware

- limited power budgets

- administrators who value predictability and clarity over raw throughput

GlusterFS aligned with the second group.

Nothing truly replaces that today.

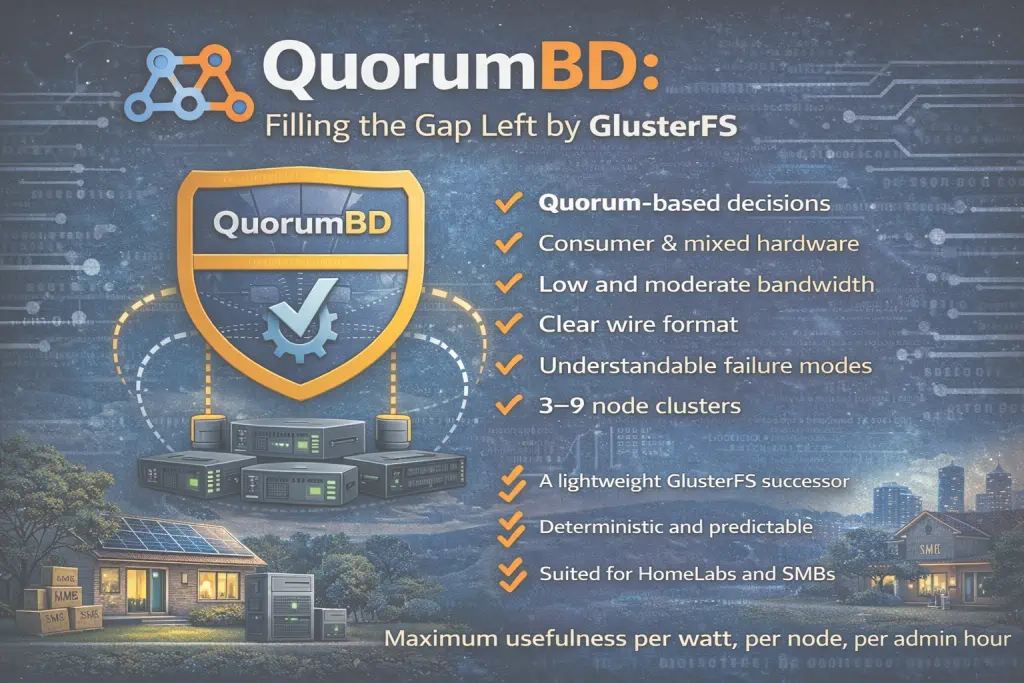

🧠 Why QuorumBD Needs to Exist

QuorumBD is being developed specifically to fill this gap.

Not as a “Ceph killer”.

Not as an enterprise storage platform.

But as a lightweight successor to GlusterFS in spirit, designed from the ground up for:

- small clusters (3–9 nodes)

- low and moderate bandwidth networks

- consumer and mixed hardware

- deterministic behavior

- understandable failure modes

- clear, documented wire formats

- strong consistency through quorum, not chatter

The performance goal is to at least match GlusterFS, even under heavy load.

The broader goal is maximum usefulness per watt, per node, per admin hour.

🔧 Design Philosophy (In a Nutshell)

QuorumBD is built around a few core ideas:

- quorum-based decisions instead of silent split-brain

- minimal background traffic

- predictable state machines

- explicit failure handling

- boring, transparent engineering over magic

- suitability for environments such as Proxmox clusters and HomeLabs

In other words:

a system that respects real-world constraints, not ideal lab conditions.
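As an illustration of the quorum idea, here is a minimal sketch of the general majority rule. It is not QuorumBD's actual implementation, and the function and node names (`has_quorum`, `may_accept_writes`, `node-a`, and so on) are hypothetical. A partition may accept writes only if it can reach a strict majority of the configured cluster members, so two disjoint partitions can never both proceed.

```python
def has_quorum(reachable_nodes: int, cluster_size: int) -> bool:
    """Return True if reachable_nodes is a strict majority of cluster_size."""
    if cluster_size <= 0 or not 0 <= reachable_nodes <= cluster_size:
        raise ValueError("invalid node counts")
    # Strict majority: more than half. An even split (e.g. 2 of 4) fails.
    return 2 * reachable_nodes > cluster_size


def may_accept_writes(partition: set, members: set) -> bool:
    """Decide whether the nodes in `partition` may accept writes.

    Only configured cluster members count towards quorum; unknown
    nodes in the partition are ignored.
    """
    reachable = partition & members
    return has_quorum(len(reachable), len(members))


members = {"node-a", "node-b", "node-c"}

# A network split isolates node-c from the other two nodes.
print(may_accept_writes({"node-a", "node-b"}, members))  # True: 2 of 3
print(may_accept_writes({"node-c"}, members))            # False: 1 of 3
```

The minority side refuses writes explicitly instead of diverging silently. This is also one reason odd cluster sizes are attractive: a four-node cluster split 2/2 leaves neither side with a majority.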

🌱 Looking Forward

The disappearance of GlusterFS leaves many users stranded:

- too small for Ceph

- too distributed for DRBD

- too practical for cloud-only tools

QuorumBD is being developed because that space still matters.

Not everyone has a datacenter.

Not everyone wants Kubernetes everywhere.

And not everyone should be forced into enterprise assumptions just to get reliable shared storage.

Sometimes, boring, honest, and well-scoped engineering is exactly what is missing.